Video deepfakes mean you can’t trust everything you see. Now, audio deepfakes might mean you can no longer trust your ears. Was that really the president declaring war on Canada? Is that really your dad on the phone asking for his email password?

Video deepfakes mean you can’t trust everything you see. Now, audio deepfakes might mean you can no longer trust your ears. Was that really the president declaring war on Canada? Is that really your dad on the phone asking for his email password?

Add another existential worry to the list of how our own hubris might inevitably destroy us. During the Reagan era, the only real technological risks were the threat of nuclear, chemical, and biological warfare.

In the following years, we’ve had the opportunity to obsess about nanotech’s gray goo and global pandemics. Now, we have deepfakes–people losing control over their likeness or voice.

What Is an Audio Deepfake?

Most of us have seen a video deepfake, in which deep-learning algorithms are used to replace one person with someone else’s likeness. The best are unnervingly realistic, and now it’s audio’s turn. An audio deepfake is when a “cloned” voice that is potentially indistinguishable from the real person’s is used to produce synthetic audio.

“It’s like Photoshop for voice,” said Zohaib Ahmed, CEO of Resemble AI, about his company’s voice-cloning technology.

However, bad Photoshop jobs are easily debunked. A security firm we spoke with said people usually only guess if an audio deepfake is real or fake with about 57 percent accuracy–no better than a coin flip.

Additionally, because so many voice recordings are of low-quality phone calls (or recorded in noisy locations), audio deepfakes can be made even more indistinguishable. The worse the sound quality, the harder it is to pick up those telltale signs that a voice isn’t real.

But why would anyone need a Photoshop for voices, anyway?

The Compelling Case for Synthetic Audio

There’s actually an enormous demand for synthetic audio. According to Ahmed, “the ROI is very immediate.”

This is particularly true when it comes to gaming. In the past, speech was the one component in a game that was impossible to create on-demand. Even in interactive titles with cinema-quality scenes rendered in real time, verbal interactions with nonplaying characters are always essentially static.

Now, though, technology has caught up. Studios have the potential to clone an actor’s voice and use text-to-speech engines so characters can say anything in real time.

There are also more traditional uses in advertising, and tech and customer support. Here, a voice that sounds authentically human and responds personally and contextually without human input is what’s important.

Voice-cloning companies are also excited about medical applications. Of course, voice replacement is nothing new in medicine–Stephen Hawking famously used a robotic synthesized voice after losing his own in 1985. However, modern voice cloning promises something even better.

In 2008, synthetic voice company, CereProc, gave late film critic, Roger Ebert, his voice back after cancer took it away. CereProc had published a web page that allowed people to type messages that would then be spoken in the voice of former President George Bush.

“Ebert saw that and thought, ‘well, if they could copy Bush’s voice, they should be able to copy mine,'” said Matthew Aylett, CereProc’s chief scientific officer. Ebert then asked the company to create a replacement voice, which they did by processing a large library of voice recordings.

“It was one of the first times anyone had ever done that and it was a real success,” Aylett said.

In recent years, a number of companies (including CereProc) have worked with the ALS Association on Project Revoice to provide synthetic voices to those who suffer from ALS.

How Synthetic Audio Works

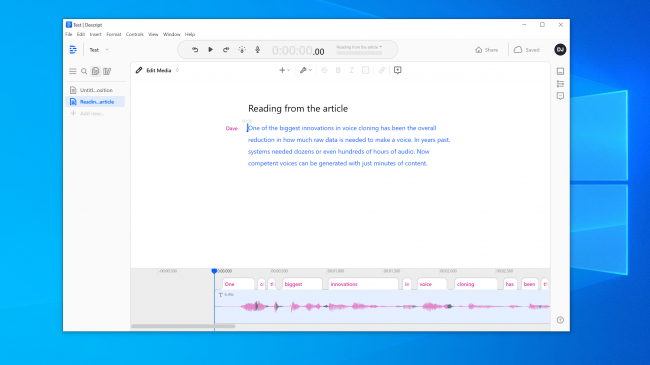

Voice cloning is having a moment right now, and a slew of companies are developing tools. Resemble AI and Descript have online demos anyone can try for free. You just record the phrases that appear onscreen and, in just a few minutes, a model of your voice is created.

You can thank AI–specifically, deep-learning algorithms–for being able to match recorded speech to text to understand the component phonemes that make up your voice. It then uses the resulting linguistic building blocks to approximate words it hasn’t heard you speak.

The basic technology has been around for a while, but as Aylett pointed out, it required some help.

“Copying voice was a bit like making pastry,” he said. “It was kind of hard to do and there were various ways you had to tweak it by hand to get it to work.”

Developers needed enormous quantities of recorded voice data to get passable results. Then, a few years ago, the floodgates opened. Research in the field of computer vision proved to be critical. Scientists developed generative adversarial networks (GANs), which could, for the first time, extrapolate and make predictions based on existing data.

“Instead of a computer seeing a picture of a horse and saying ‘this is a horse,’ my model could now make a horse into a zebra,” said Aylett. “So, the explosion in speech synthesis now is thanks to the academic work from computer vision.”

One of the biggest innovations in voice cloning has been the overall reduction in how much raw data is needed to create a voice. In the past, systems needed dozens or even hundreds of hours of audio. Now, however, competent voices can be generated from just minutes of content.

The Existential Fear of Not Trusting Anything

This technology, along with nuclear power, nanotech, 3D printing, and CRISPR, is simultaneously thrilling and terrifying. After all, there have already been cases in the news of people being duped by voice clones. In 2019, a company in the U.K. claimed it was tricked by an audio deepfake phone call into wiring money to criminals.

You don’t have to go far to find surprisingly convincing audio fakes, either. YouTube channel Vocal Synthesis features well-known people saying things they never said, like George W. Bush reading “In Da Club” by 50 Cent. It’s spot on.

Elsewhere on YouTube, you can hear a flock of ex-Presidents, including Obama, Clinton, and Reagan, rapping NWA. The music and background sounds help disguise some of the obvious robotic glitchiness, but even in this imperfect state, the potential is obvious.

We experimented with the tools on Resemble AI and Descript and created voice clone. Descript uses a voice-cloning engine that was originally called Lyrebird and was particularly impressive. We were shocked at the quality. Hearing your own voice say things you know you’ve never said is unnerving.

There’s definitely a robotic quality to the speech, but on a casual listen, most people would have no reason to think it was a fake.

We had even higher hopes for Resemble AI. It gives you the tools to create a conversation with multiple voices and vary the expressiveness, emotion, and pacing of the dialog. However, we didn’t think the voice model captured the essential qualities of the voice we used. In fact, it was unlikely to fool anyone.

A Resemble AI rep told us “most people are blown away by the results if they do it correctly.” We built a voice model twice with similar results. So, evidently, it’s not always easy to make a voice clone you can use to pull off a digital heist.

Even so, Lyrebird (which is now part of Descript) founder, Kundan Kumar, feels we’ve already passed that threshold.

“For a small percentage of cases, it is already there,” Kumar said. “If I use synthetic audio to change a few words in a speech, it’s already so good that you will have a hard time knowing what changed.”

We can also assume this technology will only get better with time. Systems will need less audio to create a model, and faster processors will be able to build the model in real time. Smarter AI will learn how to add more convincing human-like cadence and emphasis on speech without having an example to work from.

Which means we might be creeping closer to the widespread availability of effortless voice cloning.

The Ethics of Pandora’s Box

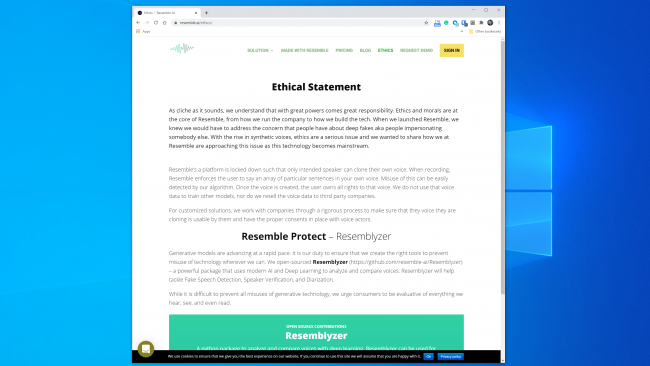

Most companies working in this space seem poised to handle the technology in a safe, responsible way. Resemble AI, for example, has an entire “Ethics” section on its website, and the following excerpt is encouraging:

“We work with companies through a rigorous process to make sure that the voice they are cloning is usable by them and have the proper consents in place with voice actors.”

Likewise, Kumar said Lyrebird was concerned about misuse from the start. That’s why now, as a part of Descript, it only allows people to clone their own voice. In fact, both Resemble and Descript require that people record their samples live to prevent nonconsensual voice-cloning.

It’s heartening that the major commercial players have imposed some ethical guidelines. However, it’s important to remember these companies aren’t gatekeepers of this technology. There are a number of open-source tools already in the wild, for which there are no rules. According to Henry Ajder, head of threat intelligence at Deeptrace, you also don’t need advanced coding knowledge to misuse it.

“A lot of the progress in the space has come through collaborative work in places like GitHub, using open-source implementations of previously published academic papers,” Ajder said. “It can be used by anyone who’s got moderate proficiency in coding.”

Security Pros Have Seen All This Before

Criminals have tried to steal money by phone long before voice cloning was possible, and security experts have always been on call to detect and prevent it. Security company Pindrop tries to stop bank fraud by verifying if a caller is who he or she claims to be from the audio. In 2019 alone, Pindrop claims to have analyzed 1.2 billion voice interactions and prevented about $470 million in fraud attempts.

Before voice cloning, fraudsters tried a number of other techniques. The simplest was just calling from elsewhere with personal info about the mark.

“Our acoustic signature allows us to determine that a call is actually coming from a Skype phone in Nigeria because of the sound characteristics,” said Pindrop CEO, Vijay Balasubramaniyan. “Then, we can compare that knowing the customer uses an AT&T phone in Atlanta.”

Some criminals have also made careers out of using background sounds to throw off banking reps.

“There’s a fraudster we called Chicken Man who always had roosters going in the background,” said Balasubramaniyan. “And there is one lady who used a baby crying in the background to essentially convince the call center agents, that ‘hey, I am going through a tough time’ to get sympathy.”

And then there are the male criminals who go after women’s bank accounts.

“They use technology to increase the frequency of their voice, to sound more feminine,” Balasubramaniyan explained. These can be successful, but “occasionally, the software messes up and they sound like Alvin and the Chipmunks.”

Of course, voice cloning is just the latest development in this ever-escalating war. Security firms have already caught fraudsters using synthetic audio in at least one spearfishing attack.

“With the right target, the payout can be massive,” Balasubramaniyan said. “So, it makes sense to dedicate the time to create a synthesized voice of the right individual.”

Can Anyone Tell If a Voice Is Fake?

When it comes to recognizing if a voice has been faked, there’s both good and bad news. The bad is that voice clones are getting better every day. Deep-learning systems are getting smarter and making more authentic voices that require less audio to create.

As you can tell from this clip of President Obama telling MC Ren to take the stand, we’ve also already gotten to the point where a high-fidelity, carefully constructed voice model can sound pretty convincing to the human ear.

The longer a sound clip is, the more likely you are to notice there’s something amiss. For shorter clips, though, you might not notice it’s synthetic–especially if you have no reason to question its legitimacy.

The clearer the sound quality, the easier it is to notice signs of an audio deepfake. If someone is speaking directly into a studio-quality microphone, you’ll be able to listen closely. But a poor-quality phone call recording or a conversation captured on a handheld device in a noisy parking garage will be much harder to evaluate.

The good news is, even if humans have trouble separating real from fake, computers don’t have the same limitations. Fortunately, voice verification tools already exist. Pindrop has one that pits deep-learning systems against one another. It uses both to discover if an audio sample is the person it’s supposed to be. However, it also examines if a human can even make all the sounds in the sample.

Depending on the quality of the audio, every second of speech contains between 8,000-50,000 data samples that can be analyzed.

“The things that we’re typically looking for are constraints on speech due to human evolution,” explained Balasubramaniyan.

For example, two vocal sounds have a minimum possible separation from one another. This is because it isn’t physically possible to say them any faster due to the speed with which the muscles in your mouth and vocal cords can reconfigure themselves.

“When we look at synthesized audio,” Balasubramaniyan said, “we sometimes see things and say, ‘this could never have been generated by a human because the only person who could have generated this needs to have a seven-foot-long neck.”

There’s also a class of sound called “fricatives.” They’re formed when air passes through a narrow constriction in your throat when you pronounce letters like f, s, v, and z. Fricatives are especially hard for deep-learning systems to master because the software has trouble differentiating them from noise.

So, at least for now, voice-cloning software is stumbled by the fact that humans are bags of meat that flow air through holes in their body to talk.

“I keep joking that deepfakes are very whiney,” said Balasubramaniyan. He explained that it’s very hard for algorithms to distinguish the ends of words from background noise in a recording. This results in many voice models with speech that trails off more than humans do.

“When an algorithm sees this happening a lot,” Balasubramaniyan said, “statistically, it becomes more confident it’s audio that’s been generated as opposed to human.”

Resemble AI is also tackling the detection problem head-on with the Resemblyzer, an open-source deep-learning tool available on GitHub. It can detect fake voices and perform speaker verification.

It Takes Vigilance

It’s always difficult to guess what the future might hold, but this technology will almost certainly only get better. Also, anyone could potentially be a victim–not just high-profile individuals, like elected officials or banking CEOs.

“I think we’re on the brink of the first audio breach where people’s voices get stolen,” Balasubramaniyan predicted.

At the moment, though, the real-world risk from audio deepfakes is low. There are already tools that appear to do a pretty good job of detecting synthetic video.

Plus, most people aren’t at risk of an attack. According to Ajder, the main commercial players “are working on bespoke solutions for specific clients, and most have fairly good ethics guidelines as to who they would and would not work with.”

The real threat lies ahead, though, as Ajder went on to explain:

“Pandora’s Box will be people cobbling together open-source implementations of the technology into increasingly user-friendly, accessible apps or services that don’t have that kind of ethical layer of scrutiny that commercial solutions do at the moment.”

This is probably inevitable, but security companies are already rolling fake audio detection into their toolkits. Still, staying safe requires vigilance.

“We’ve done this in other security areas,” said Ajder. “A lot of organizations spend a lot of time trying to understand what’s the next zero-day vulnerability, for example. Synthetic audio is simply the next frontier.”

Comments